How To Automate Data Science Tasks Using Python (Part 3)

Part 3 is about outlier detection and how to handle outliers.

Here we go again! Have you read the previous two parts of this series?

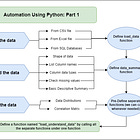

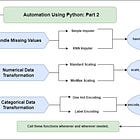

In those articles, I demonstrated how to use Python to automate tasks such as data loading, basic summarization, missing value handling, and data transformation.

If you haven’t already, consider reading them first. Doing so will make this part of the guide easier to follow.

Do you recall the main motto of this series?

If you do, I would appreciate it if you could comment below.

Here’s a reminder of the motto:

If any task in your project is repetitive or redundant, always define a function and automate it.

So in this article, we’ll look at another important aspect of data preprocessing: how to automate both detecting and handling outliers.


What are Outliers and Why is it important to address them?

Suppose you have sales data and need to analyze your average monthly sales. During the process, you notice that the average sales for October deviate significantly from the other months.

To figure out why, you examine the sales data for October. There, you notice that the sales amount for a specific day was recorded incorrectly: it shows $100,000 when it should be $10,000.

This is what we call an outlier: a data point that differs significantly from the rest of the observations in your dataset.

The reasons for each outlier may differ, but it is critical to identify them.

You might wonder, “Why is that so important?”

Simple answer: outliers can have a significant impact on your analysis and on model performance.

How?

First, as the example above shows, they can significantly skew statistics such as the mean and standard deviation.

Second, when you build a model, outliers can have a disproportionate influence on it (especially on linear models).

Finally, they may signal errors in data collection or rare but significant events.
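A quick way to see the first two points is to corrupt a single value and compare. The sketch below uses made-up numbers in the spirit of the October example (not real data): it compares the mean, median, and standard deviation with and without the mistyped day, then fits a least-squares line with NumPy’s polyfit before and after corrupting one point.

```python
import statistics

import numpy as np

# Made-up October daily sales; the third day should read 10,000
correct = [9_800, 10_200, 10_000, 9_900, 10_100]
corrupted = [9_800, 10_200, 100_000, 9_900, 10_100]  # 10,000 mistyped as 100,000

print(statistics.mean(correct), statistics.mean(corrupted))      # 10000 28000
print(statistics.median(correct), statistics.median(corrupted))  # 10000 10100
print(statistics.stdev(correct), statistics.stdev(corrupted))    # roughly 158 vs 40,250

# A least-squares line through points on y = 2x, before and after one bad value
x = np.arange(10)
y_clean = 2.0 * x
y_dirty = y_clean.copy()
y_dirty[-1] = 100.0  # the true value at x = 9 is 18

slope_clean, _ = np.polyfit(x, y_clean, 1)
slope_dirty, _ = np.polyfit(x, y_dirty, 1)
print(round(slope_clean, 2), round(slope_dirty, 2))  # 2.0 6.47
```

One bad day almost triples the mean and more than triples the slope of the fitted line, while the median barely moves.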

Now that you have a basic understanding of outliers and why they are important to detect, let us begin to automate the detection and handling processes.

Outlier Detection

There are three commonly used methods for detecting outliers:

Z-score method

Interquartile Range (IQR) method

Isolation Forest method

Which method to use depends on the nature of the data. From personal experience, these are my recommendations:

If the data is approximately normally distributed, use the z-score method, as it is based on the mean and standard deviation.

If the data distribution is skewed, consider the IQR method, which focuses on the middle 50% of the data.

Finally, if the data is high-dimensional with many features, use Isolation Forest: it looks at all features together rather than one column at a time, and it copes with the curse of dimensionality better than the other two methods.
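These rules of thumb could be folded into a small helper. The sketch below is hypothetical and not part of the series: the function name suggest_detection_method, the skewness cut-off of 1, and the limit of 10 features are illustrative assumptions rather than standard values, and pandas’ DataFrame.skew stands in for a proper normality check.

```python
import pandas as pd


def suggest_detection_method(df, skew_threshold=1.0, max_features=10):
    """Hypothetical helper encoding the rules of thumb above.

    skew_threshold and max_features are illustrative assumptions, not standard values.
    """
    numeric = df.select_dtypes(include=['number'])
    # Many features: consider them jointly with Isolation Forest
    if numeric.shape[1] > max_features:
        return 'isolation_forest'
    # Any strongly skewed column (pandas skips NaNs here): use the IQR method
    if (numeric.skew().abs() > skew_threshold).any():
        return 'iqr'
    # Otherwise treat the columns as roughly normal and use z-scores
    return 'zscore'


print(suggest_detection_method(pd.DataFrame({'sales': [1, 1, 1, 1, 1, 1, 1, 1, 1, 50]})))  # iqr
```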

Z-score method

This method finds outliers based on the number of standard deviations a data point is from the mean.
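As a worked illustration with made-up numbers: the z-score of a value x in a column with mean μ and standard deviation σ is

$$
z = \frac{x - \mu}{\sigma}
$$

If a column has μ = 10,000 and σ = 500 (hypothetical values), a value of 12,000 gives z = (12,000 - 10,000) / 500 = 4. It lies four standard deviations above the mean, so it would be flagged under the usual rule |z| > 3.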

How do we generally do this?

First, we start by selecting the numerical features:

```python
columns = df.select_dtypes(include=['number']).columns
```

Now that we’ve found the numerical features, we use SciPy’s zscore function to compute the z-score of every value in a column:

```python
import numpy as np
from scipy import stats

# Absolute z-score of each value in the column
z_scores = np.abs(stats.zscore(df['column']))
```

In the z-score technique, a value is considered an outlier if its z-score is greater than 3 or less than -3, that is, if its absolute z-score exceeds 3. The threshold of 3 comes from the empirical rule.

Why?

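For normally distributed data, approximately 68% of values fall within ±1 standard deviation of the mean, whereas 95% fall within ±2.

Finally, 99.7% fall within ±3 standard deviations, so a value beyond that range is rare enough to be treated as an outlier.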
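To check these percentages, the sketch below computes the share of a standard normal distribution that lies within ±1, ±2, and ±3 standard deviations, using scipy.stats.norm.cdf.

```python
from scipy.stats import norm

# Share of a normal distribution lying within k standard deviations of the mean
coverage = {k: norm.cdf(k) - norm.cdf(-k) for k in (1, 2, 3)}

for k, share in coverage.items():
    print(f"within ±{k} sd: {share:.1%}")
# within ±1 sd: 68.3%
# within ±2 sd: 95.4%
# within ±3 sd: 99.7%
```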

But, how can we automate the entire process of identifying the outliers?

We can create a function that follows the same steps and stores the outliers’ indices in a dictionary keyed by column name.

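```python
import numpy as np
from scipy import stats

def detect_outliers_zscore(df, threshold=3, columns=None):
    # Default to all numerical columns
    columns = df.select_dtypes(include=['number']).columns if columns is None else columns
    outliers = {}
    for col in columns:
        # Absolute z-scores; NaNs are ignored when computing the mean and std
        z_scores = np.abs(stats.zscore(df[col].to_numpy(), nan_policy='omit'))
        # Keep index labels (not positions) so they work with df.drop() later
        outliers[col] = df.index[z_scores > threshold].tolist()
    return outliers
```

Interquartile Range (IQR) Method: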

This method detects outliers based on the data’s interquartile range.

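But what is the interquartile range? It is the difference between the third and first quartiles (Q3 - Q1), so it spans the middle 50% of the data. This makes the method handy: quartiles barely move when a few extreme values appear, so it is less sensitive to outliers than the z-score method, whose mean and standard deviation are pulled by the very outliers it is trying to find.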
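Here is a small sketch, on made-up numbers, of why this matters. With SciPy’s default ddof=0, no value in a sample of n points can have an absolute z-score above √(n - 1), so with only eight values a single huge error cannot reach the threshold of 3 (you need at least 11 values before |z| > 3 is even possible). The quartiles, by contrast, do not depend on how extreme the error is, so the IQR rule still catches it.

```python
import numpy as np
import pandas as pd
from scipy import stats

# Made-up daily sales: seven ordinary days plus one mistyped value
sales = pd.Series([10_000, 10_200, 9_800, 10_100, 9_900, 10_050, 9_950, 100_000], dtype=float)

# Z-score rule: flag |z| > 3 (scipy's zscore uses ddof=0 by default)
z = np.abs(stats.zscore(sales.to_numpy()))
z_flags = z > 3

# IQR rule: flag values outside [Q1 - 1.5 * IQR, Q3 + 1.5 * IQR]
q1, q3 = sales.quantile(0.25), sales.quantile(0.75)
iqr = q3 - q1
iqr_flags = ((sales < q1 - 1.5 * iqr) | (sales > q3 + 1.5 * iqr)).to_numpy()

print(round(float(z.max()), 2), np.sqrt(len(sales) - 1))  # largest |z| vs. its ceiling sqrt(n - 1)
print(z_flags.any())       # False: the z-score rule misses the error
print(iqr_flags.tolist())  # only the last value is flagged
```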

How should we proceed with the IQR approach in our Jupyter Notebook?

After selecting the numerical columns as we did before, we calculate Q1 and Q3 (the first and third quartiles):

```python
Q1 = df['column'].quantile(0.25)
Q3 = df['column'].quantile(0.75)
```

Now that we have both Q1 and Q3, we calculate the IQR:

```python
IQR = Q3 - Q1
```

Finally, this technique uses a lower and an upper bound to decide what counts as an outlier: any value below the lower bound or above the upper bound is an outlier.

But how do we calculate the lower and upper bounds? Well, there’s a formula:

```python
lower_bound = Q1 - 1.5 * IQR
upper_bound = Q3 + 1.5 * IQR
```

Are you wondering why 1.5? (I did when I first learned about it.)

The multiplier of 1.5 is a convention (from John Tukey’s boxplot rule) that lines up well with the normal distribution: for normally distributed data, the bounds fall at roughly ±2.7 standard deviations from the mean and contain about 99.3% of the values. That range keeps the vast majority of data points while flagging those that lie far from the center.
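To make the link to the normal distribution concrete, this sketch computes where Q3 + 1.5 × IQR lands for a standard normal distribution (by symmetry, Q1 - 1.5 × IQR sits the same distance below the mean) and what share of the data falls between the two bounds.

```python
from scipy.stats import norm

# Quartiles of a standard normal distribution (mean 0, standard deviation 1)
q1, q3 = norm.ppf(0.25), norm.ppf(0.75)  # about -0.674 and 0.674
iqr = q3 - q1                            # about 1.349
upper = q3 + 1.5 * iqr                   # about 2.698 standard deviations above the mean

# By symmetry the lower bound is -upper, so this is the share between the bounds
inside = norm.cdf(upper) - norm.cdf(-upper)
print(f"bounds at ±{upper:.3f} sd, covering {inside:.2%} of the data")
```

So, for normal data, the IQR bounds sit slightly inside the ±3 standard deviations used by the z-score rule and flag roughly 0.7% of points.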

Now, let’s combine all of these into a function to automate the entire process and simply call it whenever needed.

```python
def detect_outliers_iqr(df, columns=None):
    # Default to all numerical columns
    columns = df.select_dtypes(include=['number']).columns if columns is None else columns
    outliers = {}
    for col in columns:
        Q1 = df[col].quantile(0.25)
        Q3 = df[col].quantile(0.75)
        IQR = Q3 - Q1
        lower_bound = Q1 - 1.5 * IQR
        upper_bound = Q3 + 1.5 * IQR
        # Index labels of the values outside [lower_bound, upper_bound]
        outliers[col] = df[(df[col] < lower_bound) | (df[col] > upper_bound)].index.tolist()
    return outliers
```

Isolation Forest Method:

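The Isolation Forest method is an unsupervised algorithm that isolates anomalies in a dataset.

How does the isolation forest method work?

It builds many random trees, each of which repeatedly splits the data on a randomly chosen feature at a random value. Anomalies are few and different, so they tend to be isolated in fewer splits than normal points, and that shorter average path marks them as more anomalous. When predicting, scikit-learn’s implementation labels normal points “1” and anomalous points “-1”. Hence, “-1” represents an outlier.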
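Here is a minimal sketch on synthetic data (the column names and the far-away row are made up for illustration) showing both outputs: fit_predict returns the 1/-1 labels, and score_samples returns scikit-learn’s raw scores, where lower means easier to isolate and therefore more anomalous.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
# 200 synthetic "normal" rows with two features, plus one far-away row at index 200
df_demo = pd.DataFrame(rng.normal(size=(200, 2)), columns=['feature_a', 'feature_b'])
df_demo.loc[200] = [8.0, 8.0]

iso_forest = IsolationForest(contamination=0.01, random_state=42)
labels = iso_forest.fit_predict(df_demo)    # 1 = normal, -1 = outlier
scores = iso_forest.score_samples(df_demo)  # lower = isolated faster = more anomalous

print(labels[-1])                            # label of the far-away row
print(df_demo.index[labels == -1].tolist())  # index labels of every flagged row
```

Because contamination=0.01 tells the model to expect about 1% outliers, a couple of ordinary rows may be flagged as well: contamination sets the decision threshold rather than discovering a “true” number of outliers.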

As always, select the numerical columns first, then construct an isolation forest object and use it to predict the labels.

It goes somewhat like this:

```python
from sklearn.ensemble import IsolationForest

iso_forest = IsolationForest(contamination=0.01, random_state=42)
# fit_predict expects 2-D input, hence the double brackets
outliers = iso_forest.fit_predict(df[['column']])
```

And, to automate this process, the logic goes something like this:

```python
from sklearn.ensemble import IsolationForest

def detect_outliers_isolation_forest(df, columns=None, contamination=0.01, random_state=42):
    columns = df.select_dtypes(include=['number']).columns if columns is None else columns
    # contamination = expected share of outliers; it sets the decision threshold
    iso_forest = IsolationForest(contamination=contamination, random_state=random_state)
    # Fit on all selected columns together (assumes no missing values)
    predictions = iso_forest.fit_predict(df[columns])
    # -1 marks an outlier; return index labels so df.drop() works later
    return df.index[predictions == -1].tolist()
```

Handling the Outliers Found

Now that you’ve identified the outliers, you must decide how to address them based on your needs.

There are two popular techniques for handling outliers:

Removing the outliers

Capping the outliers

I presume you already know what “removing the outliers” means: simply dropping them from the dataset.

So, what exactly does “capping the outliers” mean? Capping limits extreme values to a chosen range. Assume the data has a lower bound of 100 and an upper bound of 1000. Any value above 1000, such as 5000, is replaced with the upper bound (1000), and any value below 100, such as 2, is replaced with the lower bound (100).
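Using the bounds from this example, NumPy’s np.clip performs the capping in one call (pandas offers the same operation as Series.clip(lower=..., upper=...)). The values below are made up for illustration.

```python
import numpy as np

lower_bound, upper_bound = 100, 1000  # bounds from the example above
values = np.array([5000, 2, 450, 1000])

# Values above upper_bound become upper_bound; values below lower_bound become lower_bound
capped = np.clip(values, lower_bound, upper_bound)
print(capped.tolist())  # [1000, 100, 450, 1000]
```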

I recommend removing outliers only when they are the result of an error and are not part of the population you are studying, or when you are working with a small dataset in which each point carries so much weight that a single erroneous value can distort the results.

Cap outliers when they represent natural variation, when maintaining the sample size is essential, or when you want statistical robustness without deleting data.

To remove outliers, we normally pass their index labels to drop:

```python
df_cleaned = df.drop(index=outlier_indices)
```

And, to cap the outliers:

```python
df['column'] = np.clip(df['column'], lower_bound, upper_bound)
```

Now, let’s create a function to automate each of these tasks.

Remove the outliers:

```python
def remove_outliers(df, outlier_indices):
    df_cleaned = df.drop(index=outlier_indices)
    return df_cleaned
```

Capping Outliers:

We already know how to calculate the lower and upper bounds with both the IQR and z-score methods (for the z-score method, the bounds are the mean plus or minus 3 standard deviations). So, let’s use an if/elif/else block to support both methods in this function.

```python
import numpy as np

def cap_outliers(df, columns=None, method='iqr', threshold=3):
    # Work on a copy so the caller's DataFrame is left unchanged
    df = df.copy()
    columns = df.select_dtypes(include=['number']).columns if columns is None else columns
    for col in columns:
        if method == 'iqr':
            Q1 = df[col].quantile(0.25)
            Q3 = df[col].quantile(0.75)
            IQR = Q3 - Q1
            lower_bound = Q1 - 1.5 * IQR
            upper_bound = Q3 + 1.5 * IQR
        elif method == 'zscore':
            # Mean +/- threshold standard deviations (ddof=0, matching scipy's zscore)
            mean, std = df[col].mean(), df[col].std(ddof=0)
            lower_bound = mean - threshold * std
            upper_bound = mean + threshold * std
        else:
            raise ValueError("Unsupported method. Choose 'iqr' or 'zscore'.")
        # Replace values outside the bounds with the nearest bound
        df[col] = np.clip(df[col], lower_bound, upper_bound)
    return df
```

Wrapping it up and putting it all together

Well, we have now created all the functions required to automate outlier detection and handling. As in the earlier parts, it is time to merge them into a single function that we can call whenever we need to run all of these operations.

Are you ready?

Let us call this function: handle_outliers

```python
def handle_outliers(df, detection_method='zscore', handling_method='cap', columns=None, **kwargs):
    if detection_method == 'zscore':
        outliers = detect_outliers_zscore(df, columns=columns, **kwargs)
    elif detection_method == 'iqr':
        outliers = detect_outliers_iqr(df, columns=columns)
    elif detection_method == 'isolation_forest':
        outliers = detect_outliers_isolation_forest(df, columns=columns, **kwargs)
    else:
        raise ValueError("Unsupported detection method. Choose 'zscore', 'iqr', or 'isolation_forest'.")

    if handling_method == 'remove':
        # Z-score and IQR return {column: [labels]}; Isolation Forest returns one list
        if isinstance(outliers, dict):
            outlier_indices = {index for col_outliers in outliers.values() for index in col_outliers}
        else:
            outlier_indices = set(outliers)
        df_handled = remove_outliers(df, list(outlier_indices))
    elif handling_method == 'cap':
        # Capping needs per-column bounds, which only 'zscore' and 'iqr' define
        if detection_method == 'isolation_forest':
            raise ValueError("Capping requires 'zscore' or 'iqr' detection.")
        df_handled = cap_outliers(df, columns=columns, method=detection_method, **kwargs)
    else:
        raise ValueError("Unsupported handling method. Choose 'remove' or 'cap'.")

    return df_handled
```

If you found this article useful, consider ❤️ liking this article, and you are also welcome to support me by tipping your desired amount here.

Finally, here’s a challenge: following the approach in this article, develop an automation function for visualizing outliers and share it in the comments section below.

This is your task. Will you take this challenge?
